Summary

Using Node.js and React components, create a web app that can be your personal translator. The app uses IBM® Watson™ Speech to Text, Watson Language Translator, and Watson Text to Speech services to transcribe, translate, and synthesize speech from your microphone to your headphones.

Description

Built with React components and a Node.js server, the language translator web app captures audio input and streams it to a Watson Speech to Text service. As the input speech is transcribed, it is sent to a Watson Language Translator service to be translated into the language you select. The transcribed and translated text are both displayed by the app in real time. Each completed phrase is sent to the Watson Text to Speech service to be spoken in your choice of locale-specific voices.

The best way to understand the difference between real-time transcription and translation and “completed phrase” vocalization is to try it out. You’ll notice that the displayed text is revised as words and phrases are completed and become better understood in context. To avoid backtracking or overlapping audio, only completed phrases are vocalized. These are typically short sentences or utterances where a pause indicates a break.
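The split between interim and completed phrases can be sketched as follows. This is a minimal illustration, not the pattern's actual code: it assumes each Speech to Text message carries a `results` array whose entries have a `final` flag and an `alternatives[0].transcript` string, and the names `applyResults`, `finalText`, `interimText`, and `toSpeak` are hypothetical.

```javascript
// Hypothetical helper: folds one Speech to Text result message into UI state.
//   finalText   - phrases that are complete and will not be revised
//   interimText - the phrase still being revised as more audio arrives
//   toSpeak     - completed phrases queued for vocalization
function applyResults(state, message) {
  const next = {
    finalText: state.finalText,
    interimText: "", // each message replaces the previous interim hypothesis
    toSpeak: [...state.toSpeak],
  };
  for (const result of message.results || []) {
    const transcript = (result.alternatives?.[0]?.transcript || "").trim();
    if (!transcript) continue;
    if (result.final) {
      // Completed phrase: keep it for display and queue it to be spoken.
      next.finalText = (next.finalText + " " + transcript).trim();
      next.toSpeak.push(transcript);
    } else {
      // Interim phrase: display only, never spoken.
      next.interimText = transcript;
    }
  }
  return next;
}

const emptyState = { finalText: "", interimText: "", toSpeak: [] };

// Simulated messages: two interim revisions followed by the completed phrase.
const messages = [
  { results: [{ final: false, alternatives: [{ transcript: "good " }] }] },
  { results: [{ final: false, alternatives: [{ transcript: "good morning " }] }] },
  { results: [{ final: true, alternatives: [{ transcript: "good morning everyone " }] }] },
];

const finalState = messages.reduce(applyResults, emptyState);
console.log(finalState);
```

Displaying `finalText` followed by `interimText` gives the continuously revised text, while only the entries in `toSpeak` are ever sent on for synthesis.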

For the best live experience, wear headphones to listen to the translated version of what your microphone picks up. Alternatively, you can use the toggle buttons to record and transcribe first without translating. When ready, select a language and voice, and then enable translation (and speech).

When you have completed this code pattern, you will understand how to:

- Stream audio to the Watson Speech to Text service using a WebSocket

- Use the Watson Language Translator service with a REST API

- Retrieve and play audio from the Watson Text to Speech service using a REST API

- Integrate the Watson Speech to Text, Watson Language Translator, and Watson Text to Speech services in a web app

- Use React components and a Node.js server
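As a rough guide to what these interfaces look like on the wire, the sketch below builds the opening WebSocket message for Speech to Text and the REST requests for Language Translator and Text to Speech. It reflects the services' public interfaces as commonly documented and should be checked against the current API references. The base URLs are placeholders, the helper names are hypothetical, and the pattern itself may use an SDK rather than raw requests.

```javascript
// Placeholder base URLs (assumptions): use the URLs from your own service credentials.
const TRANSLATOR_URL = "https://translator.example.com";
const TTS_URL = "https://tts.example.com";

// First text message sent on the Speech to Text WebSocket once it opens.
// Binary audio chunks follow; a final {"action": "stop"} message ends the request.
function sttStartMessage() {
  return JSON.stringify({
    action: "start",
    "content-type": "audio/l16;rate=16000", // assumed audio format for this sketch
    interim_results: true, // ask for results while the phrase is still in progress
  });
}

// IAM API keys can be sent as HTTP basic auth with the literal user name "apikey".
function basicAuth(apikey) {
  return "Basic " + Buffer.from("apikey:" + apikey).toString("base64");
}

// Describes a Language Translator request (not sent here).
function translateRequest(text, source, target, apikey) {
  return {
    url: `${TRANSLATOR_URL}/v3/translate?version=2018-05-01`,
    options: {
      method: "POST",
      headers: { "Content-Type": "application/json", Authorization: basicAuth(apikey) },
      body: JSON.stringify({ text: [text], source, target }),
    },
  };
}

// Describes a Text to Speech request (not sent here); the response body is audio.
function synthesizeRequest(text, voice, apikey) {
  const url = new URL(`${TTS_URL}/v1/synthesize`);
  url.searchParams.set("voice", voice);
  return {
    url: url.toString(),
    options: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Accept: "audio/wav",
        Authorization: basicAuth(apikey),
      },
      body: JSON.stringify({ text }),
    },
  };
}

// Example voice name; available voices depend on the service instance.
const req = synthesizeRequest("hola", "es-ES_LauraV3Voice", "my-api-key");
console.log(req.url);
```

Each returned object can be passed to an HTTP client, for example `fetch(req.url, req.options)` on a Node.js version that provides `fetch`. Keeping the API key on the Node.js server, rather than in the React client, is the usual reason for routing these calls through a server.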

Note: This code pattern includes instructions for running Watson services on IBM Cloud or with the Watson API Kit on IBM Cloud Pak for Data.

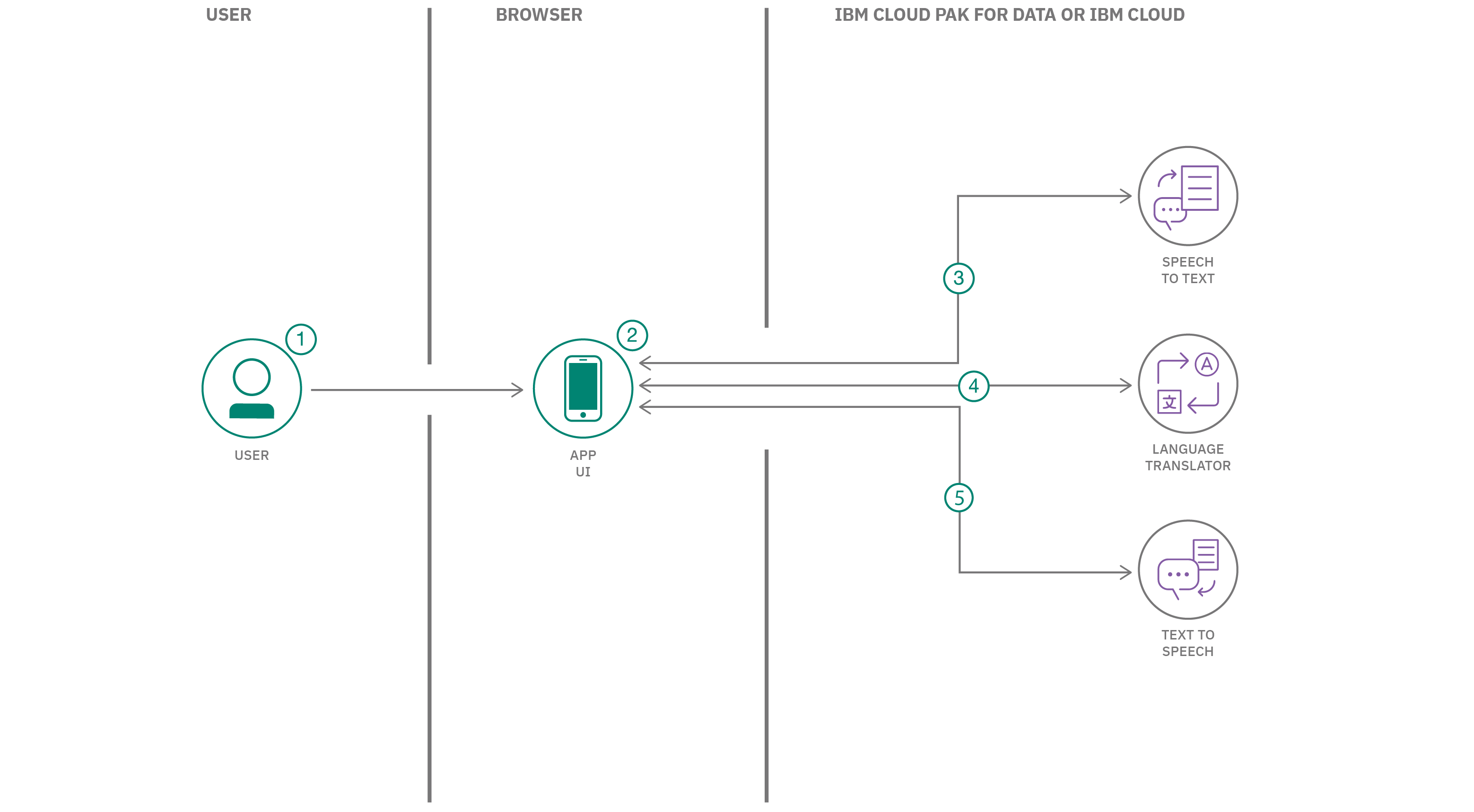

Flow

- The user presses the microphone button, and the app captures the input audio.

- The audio is streamed to the Speech to Text service using a WebSocket.

- The transcribed text from the Speech to Text service is displayed and updated.

- The transcribed text is sent to Language Translator and the translated text is displayed and updated.

- Completed phrases are sent to Text to Speech and the resulting audio is automatically played.
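The last three steps of the flow can be sketched as a small pipeline. This is an illustration under stated assumptions rather than the pattern's implementation: `createPipeline`, `translate`, `speak`, and `onUpdate` are hypothetical names, and the translation and speech calls are injected as functions so the example runs with stubs instead of real services.

```javascript
// Hypothetical pipeline: every transcript is translated and displayed,
// but only completed phrases are spoken, one at a time.
function createPipeline({ translate, speak, onUpdate }) {
  // Promise chain that serializes playback so audio clips never overlap.
  let playback = Promise.resolve();

  return async function handleTranscript(transcript, isFinal) {
    const translated = await translate(transcript);
    onUpdate({ transcript, translated, isFinal }); // refresh the displayed text

    if (isFinal) {
      // Queue this phrase behind any audio still playing. A failure in an
      // earlier clip is swallowed here so that it does not block later ones.
      playback = playback.catch(() => {}).then(() => speak(translated));
      await playback;
    }
    return translated;
  };
}

// Stubs standing in for the Language Translator and Text to Speech calls.
const spoken = [];
const updates = [];
const handle = createPipeline({
  translate: async (text) => `[es] ${text}`,
  speak: async (text) => { spoken.push(text); },
  onUpdate: (update) => updates.push(update),
});

// Simulate one interim transcript followed by the completed phrase.
const demo = (async () => {
  await handle("hello", false);
  await handle("hello world", true);
  return { spoken, updates };
})();

demo.then((result) => console.log(result));
```

Both transcripts reach `onUpdate`, but only the completed phrase reaches `speak`, which mirrors the display-everything, vocalize-only-final behavior described in the flow.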

Find the detailed steps for this pattern in the README file. The steps show you how to:

- Provision the Watson services.

- Deploy the server.

- Use the web app.

Mark Sturdevant