The Apple Glass smartglasses or an Apple-produced VR or AR headset could take advantage of other hardware to determine where it is and how it moves in three-dimensional space, by sharing data about the local environment.

One of the problems VR and AR headset producers have to contend with is the need to know where the head-mounted device is in an environment. This is especially important for augmented reality applications, as viewing systems that overlay a digital object on a real-world scene have to position the object precisely in the user’s view to sell the illusion of its existence in the real world.

Headsets have multiple ways to track their position, including accelerometers and cameras that point out into the environment to track nearby items.

However, in cases where there are multiple people with head-mounted vision systems, or multiple iPhone users with ARKit apps as currently observable, each device typically handles its own coordinate-tracking system. Devices typically don’t share coordinates with each other during use, and generally work independently of each other.

In a patent granted by the US Patent and Trademark Office on Tuesday titled “Method and device for synchronizing augmented reality coordinate systems,” Apple suggests a way to get all devices to metaphorically sing from the same songbook and to work with each other, by synchronizing data.

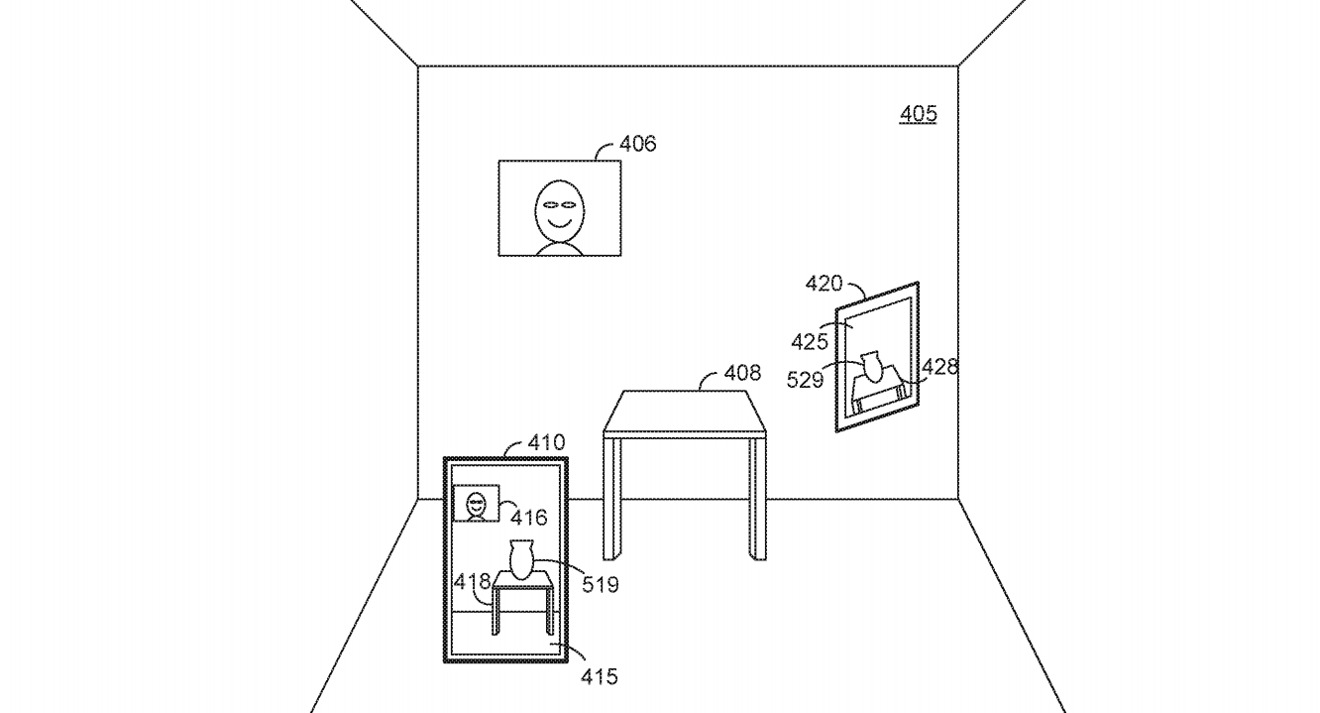

The idea starts with one electronic device handing over multiple items in a “feature set” to a second device, such as an iPhone or a base station transmitting data to an AR headset. This feature set can include a reference location in 3D space, coordinates for each of the devices, and in some versions, a map of the 3D space and points of interest within it that could be used to place virtual objects.

The second device can use this data as a starting point for an AR experience for the user, without needing to map the area itself beforehand. The second device could still gather its own data and update the feature set it has received, as well as transmitting data back to the first device about its updated location and any other pertinent data.
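As a rough illustration of that exchange, the Python sketch below models a feature set and shows how a second device could line up its own coordinates with the first device's. It is not taken from the patent: the `FeatureSet` class, its field names, and the helper functions are all hypothetical, and it assumes the two coordinate systems differ only by a shift in position, with no rotation or scaling.

```python
from dataclasses import dataclass, field

Vec3 = tuple[float, float, float]


@dataclass
class FeatureSet:
    """Hypothetical container for the data the first device hands over."""
    reference_location: Vec3            # shared landmark, in the sender's coordinates
    device_positions: dict[str, Vec3]   # device name -> position, sender's coordinates
    points_of_interest: dict[str, Vec3] = field(default_factory=dict)


def frame_offset(reference_in_sender: Vec3, reference_in_receiver: Vec3) -> Vec3:
    """Shift that maps sender coordinates onto receiver coordinates.

    Assumption: the frames differ by translation only (no rotation).
    """
    return tuple(r - s for s, r in zip(reference_in_sender, reference_in_receiver))


def sender_to_receiver(point: Vec3, offset: Vec3) -> Vec3:
    """Convert a point from the sender's frame into the receiver's frame."""
    return tuple(p + o for p, o in zip(point, offset))


def receiver_to_sender(point: Vec3, offset: Vec3) -> Vec3:
    """Convert a point from the receiver's frame back into the sender's frame."""
    return tuple(p - o for p, o in zip(point, offset))


# The first device (for example, an iPhone) shares its feature set.
shared = FeatureSet(
    reference_location=(1.0, 0.0, 2.0),
    device_positions={"phone": (0.0, 0.0, 0.0)},
    points_of_interest={"table": (2.0, 0.0, 2.5)},
)

# The second device sees the same reference at a different spot in its own frame.
offset = frame_offset(shared.reference_location, (4.0, 0.0, 3.0))

# It can place the shared point of interest without mapping the room itself...
table_for_headset = sender_to_receiver(shared.points_of_interest["table"], offset)

# ...and report its own position back in the first device's coordinates.
shared.device_positions["headset"] = receiver_to_sender((4.5, 0.0, 3.0), offset)
```

Storing everything in the sender's coordinates and converting at the edges is just one possible design; a real system would also have to account for differences in orientation between devices.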

Multiple devices could be fed the same data to have identical AR experiences

The handing back and forth of data can allow for other features to be created, such as the first device providing updated 3D maps for an area based on the second device’s new location.

While the system has obvious applications in AR and VR, such as guiding visitors around a museum, there are also alternative ways the idea could be used, and not necessarily in a larger system. The way the patent is described, it could help enhance Apple Glass, Apple’s rumored smartglasses, in another way.

As data including 3D mapping information is being transferred between devices, it could be possible for it to be generated by an iPhone or another device, then passed over to Apple Glass itself. This would in theory cut down on the amount of processing and sensors required on Apple Glass, enabling it to be made in a less bulky and more attractive form.

Apple files numerous patent applications on a weekly basis, but while the existence of a filing indicates areas of interest for Apple’s research and development efforts, it doesn’t guarantee the existence of a feature in a future product or service.

The patent lists its inventors as Michael E. Buerli, Andreas Moeller, and Michael Kuhn. It was originally filed on June 22, 2018.

Data sharing between devices for AR purposes has cropped up previously in patents, including one from August for “Multiple user simultaneous localization and mapping (SLAM).” In it, Apple proposed combining data from multiple devices surveying an object or scene together, to create a map quickly and more accurately by splitting the workload.
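The workload-splitting idea can be sketched very loosely in Python. The example below is only an illustration, not the method in Apple's filing: the `merge_maps` function and the landmark names are hypothetical, it assumes every device already reports positions in one common coordinate system, and it simply averages the positions of landmarks that more than one device has seen. Real SLAM systems are far more involved.

```python
from collections import defaultdict


def merge_maps(device_maps):
    """Combine per-device landmark maps into one map.

    Each map is a dict of landmark name -> (x, y, z). Landmarks seen by
    several devices are averaged; landmarks seen by one device are kept as-is.
    """
    sums = defaultdict(lambda: [0.0, 0.0, 0.0])
    counts = defaultdict(int)
    for landmarks in device_maps:
        for name, position in landmarks.items():
            for axis, value in enumerate(position):
                sums[name][axis] += value
            counts[name] += 1
    return {
        name: tuple(value / counts[name] for value in total)
        for name, total in sums.items()
    }


# Two devices each survey part of a room; only the lamp is seen by both.
phone_map = {"door": (0.0, 0.0, 0.0), "lamp": (2.0, 1.0, 0.0)}
headset_map = {"lamp": (4.0, 1.0, 2.0), "desk": (1.0, 1.0, 1.0)}

merged = merge_maps([phone_map, headset_map])
```

Each device contributes landmarks the other missed, which is where the speed gain comes from, while overlapping observations are combined into a single estimate.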

Earlier filings about inter-device communications for AR and VR purposes include a “Privacy Screen” that uses AR to show information only to a user and not to anyone else, and an AR headset that may automatically unlock an iPhone if a user is nearby and about to look at it.