A series of patent applications shows that Apple is focused on how users can work in an augmented reality or virtual environment, with the company addressing the practical side of making that space feel more real.

Maybe we’ll get goggles first, or maybe “Apple Glass” will be as slim and stylish as we hope. Ultimately, though, Apple sees AR and VR being used for more than the games we see in goggles, or the notifications we might see in glasses.

Instead, it’s focusing on how users could get work done within an environment that is partly or wholly computer generated. That includes how we might use applications that only exist in our AR world, and how sound can help make an all-encompassing experience. It also looks at how we look around, and how we can find our way when half our view is virtual and half is real.

Audio in AR

One of the newly revealed patent applications, “3D audio rendering using volumetric audio rendering and scripted audio level-of-detail,” wants to help users experience a more natural-seeming environment. It focuses on how we may hear the sounds of the AR world, and Apple is also concerned with how programmers can make better, easier use of audio.

“Computer programmers use 2D and 3D graphics rendering and animation infrastructure as a convenient means for rapid software application development…,” begins Apple. “One challenge for such graphical frameworks is that graphical programs such as games often require audio features that must be determined in real time based on non-deterministic or random actions of various objects in a scene.”

A river can’t be rendered as a single point of sound

So a game might have an explosion, but if you’re looking away from that, the sound of it has to appear behind you. “Incorporating audio features in the graphical framework often requires significant time and resources to determine how the audio features should change when the objects in a scene change,” says the patent application.

Apple says that currently sounds are tied to specific points in the environment, such as that explosion being placed where the correct graphics are. But while this is typical of AR, it’s not always ideal and it is always complicated.

“[It] usually means that an application is required to generate points for each of various sounds that exist in the virtual audio environment,” says Apple. “This process is complex, and current approaches are typically ad-hoc.”

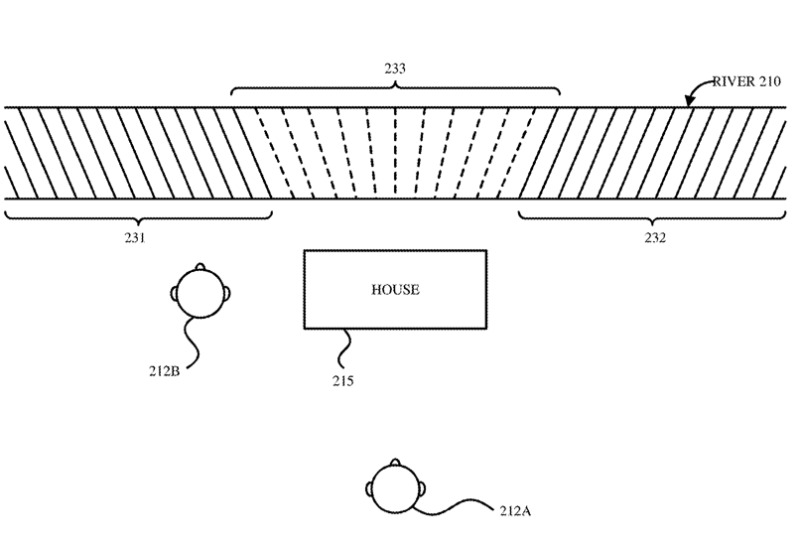

The drawings in the patent application show, as an example, a user facing an AR river. There is no single point of origin for the sounds of the water. Instead, the source is a continuous area, a region within the AR environment.

Apple’s solution is to “represent a sound source in a 3D virtual audio environment as a geometric volume, rather than as a point.” The patent application discusses many methods and issues to do with replacing a point with an overall region, but the purpose is always to create this more natural sound environment.

It could also be something that Apple AR itself, as a kind of AR operating system, might provide instead of every developer having to create it.

“This makes it possible for an audio rendering engine, rather than an application program, to use the geometric volume of the object to render a more realistic audio environment,” says Apple.
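To make the difference between a point and a volume concrete, here is a minimal Python sketch. It is not taken from the patent application: the `BoxSource` class, the box shape, and the simple inverse-distance falloff in `gain` are all assumptions chosen for illustration. The idea it shows is that a renderer can measure loudness from the nearest part of the volume rather than from one fixed point.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass(frozen=True)
class BoxSource:
    """Hypothetical volumetric sound source: an axis-aligned box."""
    lo: Vec3  # minimum corner (x, y, z)
    hi: Vec3  # maximum corner (x, y, z)

    def closest_point(self, listener: Vec3) -> Vec3:
        # Clamp each listener coordinate to the box extent on that axis.
        return tuple(min(max(p, a), b)
                     for p, a, b in zip(listener, self.lo, self.hi))


def gain(source: BoxSource, listener: Vec3, ref_distance: float = 1.0) -> float:
    """Assumed inverse-distance falloff, measured to the nearest point of the volume."""
    d = math.dist(listener, source.closest_point(listener))
    return 1.0 if d <= ref_distance else ref_distance / d


# A long, thin "river" and, for comparison, a single point at its center.
river = BoxSource(lo=(-50.0, 0.0, -1.0), hi=(50.0, 0.0, 1.0))
point = BoxSource(lo=(0.0, 0.0, 0.0), hi=(0.0, 0.0, 0.0))

listener = (40.0, 0.0, 5.0)   # standing on the bank, far downstream
print(gain(river, listener))  # 0.25: the water is 4 units away
print(gain(point, listener))  # much quieter: the point is about 40 units away
```

With a point source, a listener far downstream would barely hear the river even while standing next to it. Measuring from the volume keeps the water audible along its whole length.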

Directing your attention

If there is an explosion in a game, you are most likely to look in its direction, but that is entirely up to you. Apart from moments with specific movements or sounds, though, AR can present a rather unvarying view.

As you look through any AR system, Apple says that what you can see will range “from wholly synthetic experiences to barely perceptible computer-generated media content superimposed on real-world stimuli.” You might have an entire world in VR, or you could have the smallest of “turn here” signs as you walk down a real street.

Apple’s system could allow an AR application to put a glow around where you’re supposed to look

An issue that Apple wants to address with another patent application is how the user knows where to look. When they are surrounded by VR, or when there is a single AR object by them, there needs to be a way to direct the user’s attention when needed.

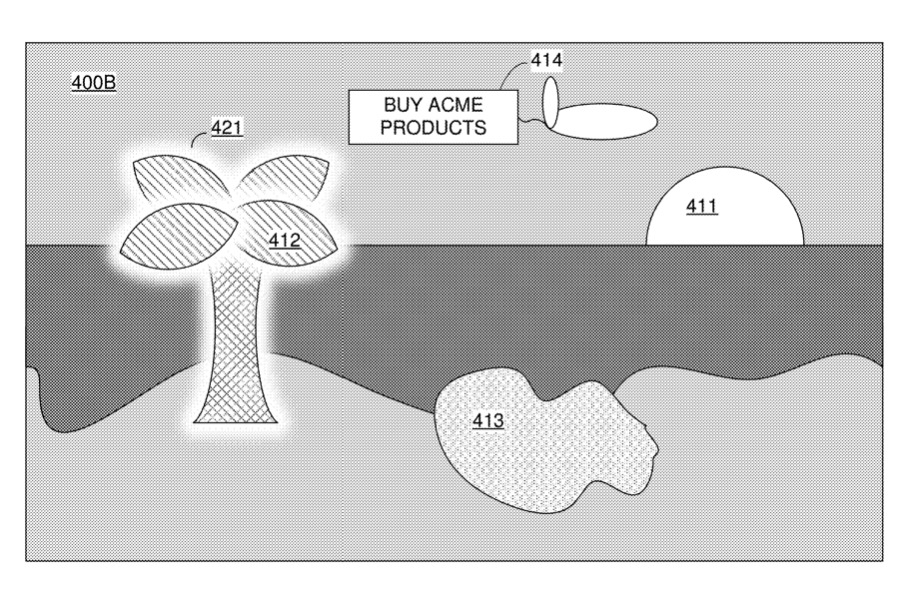

“Attention direction on optical passthrough displays” concentrates on AR where the computer-generated reality (CGR) of what you’re seeing is a combination of the real world and this superimposed media content.

“[It] may be desirable to direct the attention of a user in a CGR environment to an object or area of the CGR environment,” says Apple. “For example, a game executed by an HMD [head-mounted display] presenting the CGR environment may desire to direct the user’s attention to a game objective.”

“As another example, a map application executed by the HMD may desire to direct the user’s attention to a map destination,” it continues.

Interestingly, Apple is also looking at when it would be best to prevent a user’s attention being drawn to something. “[It] may be desirable to direct the attention of a user away from an object or area of the CGR environment, such as an advertisement or inappropriate context.”

Whatever the motivation for attracting attention or avoiding it, Apple’s proposal comes down very broadly to two options. It can display “noise in areas of the CGR environment where attention of the user is not to be directed,” for instance.

Or it can use what Apple describes as masking. If an application needs a user to look at an AR pot plant on a table, that plant is a graphical image superimposed onto reality. An attention system might add a masking element “behind” the object, and then add a glow to it, for instance.
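The first of those options, noise everywhere except where the user should look, can be sketched in a few lines of Python. This is an illustration only, not Apple’s method: the function name `add_distractor_noise`, the grid-of-brightness-values frame, the rectangular target region, and the uniform noise are all assumptions.

```python
import random


def add_distractor_noise(frame, target, amplitude=0.2, seed=0):
    """Return a copy of `frame` with noise added outside the `target` rectangle.

    frame:  list of rows of brightness values in [0, 1] (hypothetical overlay layer)
    target: (x0, y0, x1, y1), with x1 and y1 exclusive; this region is left untouched
    """
    rng = random.Random(seed)  # seeded so the sketch is repeatable
    x0, y0, x1, y1 = target
    out = []
    for y, row in enumerate(frame):
        new_row = []
        for x, value in enumerate(row):
            if x0 <= x < x1 and y0 <= y < y1:
                # Inside the region the user should look at: keep it clean.
                new_row.append(value)
            else:
                # Elsewhere: perturb the value, then clamp back into [0, 1].
                noisy = value + rng.uniform(-amplitude, amplitude)
                new_row.append(min(1.0, max(0.0, noisy)))
        out.append(new_row)
    return out


frame = [[0.5] * 4 for _ in range(4)]
result = add_distractor_noise(frame, target=(1, 1, 3, 3))
for row in result:
    print(["%.2f" % v for v in row])
```

In this sketch the clean region stands out because everything around it is degraded, which is the reverse of the masking approach, where the target itself is highlighted.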

Working tools in AR

One item that is very likely to be present in an AR environment, and to require a user’s attention, is an application, a tool. AR can turn any surface into a calculator, for instance, or a screen.

A third patent application, newly uncovered, is simply called “Displaying Applications,” and it involves making an AR tool fit into the environment around the user. “The techniques include determining a position of a physical object on a physical surface,” says Apple, “[and] displaying a representation of an application in a simulated reality setting.”

How real objects could be made AR tools

It also involves “modifying attributes of the representation of the application in response to detecting changes in the position of the physical object on the physical surface,” such as when an object is picked up.
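As a rough picture of what that could mean in code, here is a small Python sketch. Everything in it is hypothetical: the `AppRepresentation` fields, the `update_representation` function, and in particular the choice to hide the application when the object is lifted are assumptions, since the application as quoted does not say which attributes change.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class AppRepresentation:
    """Hypothetical attributes of an application shown in the simulated setting."""
    x: float
    y: float
    visible: bool = True


def update_representation(
    rep: AppRepresentation,
    object_position: Optional[tuple[float, float]],
) -> AppRepresentation:
    """Return new attributes after the tracked physical object moves.

    object_position is the object's (x, y) on the surface, or None when the
    object is no longer detected there (for example, when it is picked up).
    """
    if object_position is None:
        # Assumed behavior: hide the app but remember where it was.
        return replace(rep, visible=False)
    x, y = object_position
    # Assumed behavior: the app follows the object across the surface.
    return replace(rep, x=x, y=y, visible=True)


rep = AppRepresentation(x=0.0, y=0.0)
rep = update_representation(rep, (0.3, 0.1))  # object slid across the desk
rep = update_representation(rep, None)        # object picked up
print(rep)
```

Treating the physical object’s position as the only input keeps the sketch simple. A real system would presumably track orientation and other state as well.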

The approach is similar to the recently revealed patent application regarding turning surfaces into AR controls. This new application is also credited to Samuel Lee Iglesias, whose related work includes using trackpads to manipulate AR objects.

Apple’s plans for AR

As noted by AppleInsider before, Apple’s AR work is clearly not confined to one single product. Rather, the company seems to be focusing on it becoming a huge shift in how all of us use our devices.

It’s possibly still the case that a head-mounted display is automatically associated with gaming, but Apple is putting considerable effort into making a technology we can all actually work with.